“We moved it to the cloud! How did we lose our data? We thought the cloud was automatically redundant and infinitely durable!” Anyone who has worked in the public cloud space for any length of time has heard some version of this. The truth is that moving valuable data and workloads to the cloud takes careful planning to make sure the data gets the durability and availability that best fit the use case. There is no magic cloud button that guarantees 100% durability and availability, not even in the most expensive solutions. But have no fear, there is hope. To illustrate why durability and availability matter, we will use the largest cloud provider, Amazon Web Services (AWS), as an example.

Durability vs. Availability

So, what’s the difference between durability and availability anyway? Durability is the probability that your data will still be intact and retrievable from the storage system when you need it. In AWS, most storage tiers are designed for what is often called “eleven 9s” of durability, meaning 99.999999999% durability over the course of a year. In other words, if you store 10,000,000 files or objects, you can expect to lose, on average, just one of them to corruption or other issues every 10,000 years. Pretty good, right? If only we could expect that kind of durability from our cars. Availability, on the other hand, is a bit of a different story.
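The 99.999999999% figure is easier to reason about as an expected loss rate. The following is a minimal sketch of that arithmetic, assuming each object is lost independently at the quoted annual rate; the function names are made up for illustration and are not part of any AWS tool.

```python
# Back-of-the-envelope durability math (illustrative only).
# 99.999999999% annual durability leaves a 0.000000001% annual loss rate.
ANNUAL_LOSS_RATE = 1e-11


def expected_annual_losses(object_count: int, loss_rate: float = ANNUAL_LOSS_RATE) -> float:
    """Average number of objects lost per year, assuming independent losses."""
    return object_count * loss_rate


def years_per_lost_object(object_count: int, loss_rate: float = ANNUAL_LOSS_RATE) -> float:
    """Average number of years between single-object losses."""
    return 1.0 / expected_annual_losses(object_count, loss_rate)


if __name__ == "__main__":
    for count in (10_000, 10_000_000):
        years = years_per_lost_object(count)
        print(f"{count:>12,} objects: about one loss every {years:,.0f} years")
```

Storing more objects shortens the expected time between losses proportionally, which is why the object count matters as much as the percentage.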

Availability is better described as the probability that you can retrieve your data immediately when you need it. Many AWS storage services, including the standard tier of S3, are designed for 99.99% availability, so there is a 0.01% chance that you won’t be able to access your data immediately. As mentioned before, though, there is a much lower chance that the data won’t actually be intact upon arrival.
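To put an availability percentage in concrete terms, it can be converted into expected downtime per year. This is a rough sketch that assumes a 365-day year and treats the percentage as a simple fraction of total time; the helper name is hypothetical.

```python
# Convert an availability percentage into expected downtime per year.
HOURS_PER_YEAR = 365 * 24  # ignores leap years


def annual_downtime_minutes(availability_percent: float) -> float:
    """Minutes per year during which data may be unreachable."""
    unavailable_fraction = 1.0 - availability_percent / 100.0
    return unavailable_fraction * HOURS_PER_YEAR * 60


if __name__ == "__main__":
    for pct in (99.0, 99.9, 99.99):
        print(f"{pct}% available: about {annual_downtime_minutes(pct):.1f} minutes of downtime per year")
```

At 99.99%, this works out to a little under an hour per year, which may or may not be acceptable depending on the workload.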

To differentiate between the two concepts in simple terms: availability is whether you can reach your data at the moment you ask for it, while durability is whether that data is still intact and uncorrupted when it gets to you. Availability does not come for free. It must be architected, and it is every bit as important.

Architecting High Availability

For this discussion, we will focus on Amazon’s object storage solution, Simple Storage Service (S3), with the goal of understanding the basics. Most tiers of Amazon S3 store your data on multiple devices across at least three of what Amazon calls Availability Zones (AZs). Think of Availability Zones as independent, physically separate locations that all sit within the same region. If the server hosting your data in one location (AZ) goes down, the other locations are ready to pick up the slack immediately.

The tricky part here is that most S3 storage tiers keep data in at least three AZs; however, some less-expensive tiers, such as S3 One Zone-Infrequent Access, store data in only one AZ. This is where planning comes into play. Often, the assumption that “cloud is automatically redundant” causes issues when the wrong tier or service is chosen. Sadly, most organizations don’t find this out until it’s time to retrieve their data. The right choice depends on the data: while some data is highly critical, other data can wait to be retrieved for a few hours or even a few days.
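One way to keep the tier choice from being an accident is to check it against an explicit requirement before data is written. The sketch below is a hypothetical planning helper, not an AWS API: the table covers only three S3 storage classes, and the AZ counts reflect how AWS describes them at the time of writing, so confirm them against current AWS documentation.

```python
# Minimum number of Availability Zones per S3 storage class.
# Illustrative subset only; verify against current AWS documentation.
MIN_AZS_BY_STORAGE_CLASS = {
    "STANDARD": 3,
    "STANDARD_IA": 3,
    "ONEZONE_IA": 1,
}


def check_storage_class(storage_class: str, required_azs: int) -> bool:
    """Return True if the class keeps data in at least `required_azs` zones."""
    if storage_class not in MIN_AZS_BY_STORAGE_CLASS:
        raise ValueError(f"Unknown storage class: {storage_class!r}")
    return MIN_AZS_BY_STORAGE_CLASS[storage_class] >= required_azs


if __name__ == "__main__":
    for name in MIN_AZS_BY_STORAGE_CLASS:
        verdict = "ok" if check_storage_class(name, required_azs=3) else "single-AZ risk"
        print(f"{name:<12} {verdict}")
```

A check like this could run in a deployment pipeline so that a critical dataset never lands in a single-AZ tier unnoticed.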

So, is that it? Just choose a storage tier that uses multiple AZs? The short answer is probably not; rather, it depends on just how critical access to the particular dataset is. Let’s say there’s a natural disaster, such as a flood, earthquake, or tornado, and all three of the AZs go down. Now what? This is where the previously mentioned regions come into play. If the data is located in California, we can also replicate it to a region that is less earthquake-prone, such as Virginia. We now have the data stored in six Availability Zones across two regions, which, of course, roughly doubles the cost of data storage.

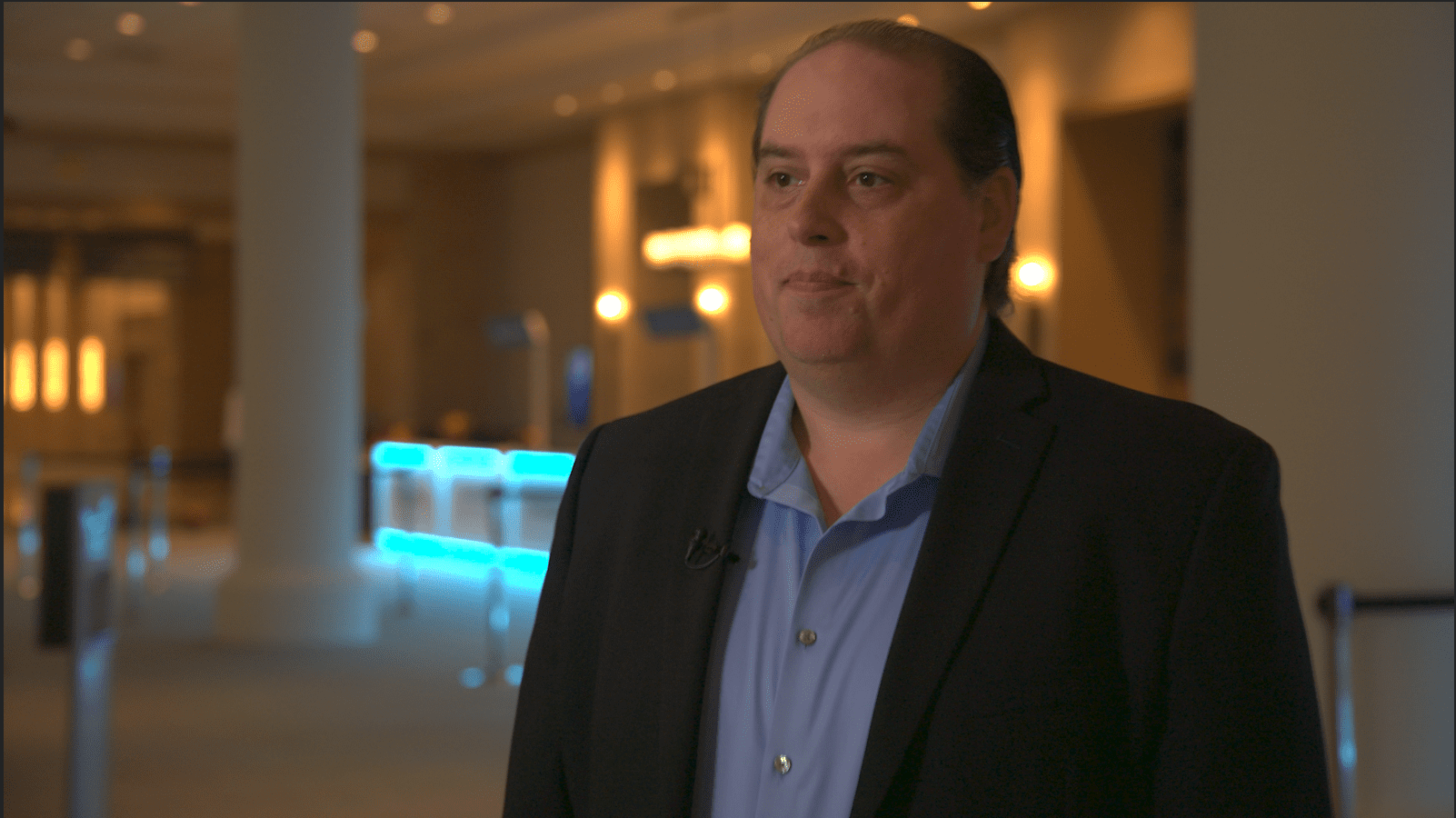
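The trade-off in the two-region setup described above can be estimated with simple arithmetic. This sketch assumes the regions fail independently, which is an idealization, and uses a made-up price per GB purely as a placeholder; it also ignores replication transfer and request charges.

```python
# Rough availability and cost estimate for replicating across regions.
# Assumes independent regional failures; the price below is hypothetical.

def combined_availability(per_region: float, regions: int) -> float:
    """Probability that at least one region is reachable."""
    return 1.0 - (1.0 - per_region) ** regions


def monthly_storage_cost(size_gb: float, price_per_gb: float, regions: int) -> float:
    """Storage-only cost; excludes replication transfer and request fees."""
    return size_gb * price_per_gb * regions


if __name__ == "__main__":
    placeholder_price = 0.02  # hypothetical USD per GB-month, not a real AWS rate
    for n in (1, 2):
        availability = combined_availability(0.9999, n)
        cost = monthly_storage_cost(1_000, placeholder_price, n)
        print(f"{n} region(s): availability {availability:.8f}, storage cost ${cost:,.2f}/month")
```

The second region adds several nines of theoretical availability while the storage bill scales linearly, which is the decision the dataset's criticality has to justify.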

While many of the planning techniques and features of object storage carry over to operating system data, any workload moving to the cloud needs to be carefully architected and vetted, often with the help of a third party like Presidio, to balance availability, durability, and price, to name just a few considerations.

Learn more about Presidio Cloud Solutions.