In today’s world, computer systems must be reliable. With more and more businesses relying on technology, any downtime can result in significant financial losses. That’s why developers need to ensure their applications can withstand inevitable failures in distributed systems. One powerful tool in their arsenal is Exponential Backoff with Jitter.

What is Exponential Backoff?

Exponential Backoff is a technique that allows an application to retry an operation that has failed, with progressively increasing wait time between retries. With each failure, the application increases the wait time exponentially. This allows the system to recover from any transient failures that may cause the issue. Also, the approach ensures that the application doesn’t flood the system with retries and potentially make the problem more severe.

Example:

Let’s say you have a script that makes an API call to a service, that may occasionally return an error due to network connectivity issues or throttling. You would want to implement a retry strategy that retries the API call up to 5 times with an increasing delay time.

Here’s how to implement the Exponential Backoff strategy:

| Retry Attempt | Delay Time (seconds) |

|---|---|

| 1 | 1.0 |

| 2 | 2.0 |

| 3 | 4.0 |

| 4 | 8.0 |

| 5 | 16.0 |

As you can see, the delay time starts at a base value of 1.0 seconds and doubles with each retry attempt. This gives the service enough time to recover from the error and reduces retry storms.

However, using this strategy alone may still result in all retries happening at the same time, potentially overloading the service, and causing more problems. That’s where Exponential Backoff with Jitter comes in.

What is Exponential Backoff with Jitter?

It is a technique used for retrying failed operations in distributed systems. It involves gradually increasing the delay between retry attempts, starting small and growing exponentially, until a maximum delay is reached. This approach reduces system load and prevents overwhelmingly excessive retries.

However, scheduling all retries at the same time can still cause spikes in system load and further failures. To mitigate this, Jitter is introduced, which adds random variation to the delay between retry attempts. This helps spread out retries and avoids system load spikes.

Using Exponential Backoff with Jitter is beneficial for handling transient failures, such as network errors or service throttling. It allows the system time to recover and resolve the issues. Additionally, by reducing system load and avoiding spikes through Jitter, system failure risk can be minimized.

Example:

With Exponential Backoff with Jitter, you add some randomness to the delay time by introducing a random delay, or “jitter”, to the next retry delay time. This ensures that the retries are not synchronous and reduces the likelihood of a retry storm. Here’s how the table would look with exponential backoff with Jitter:

| Retry Attempt | Delay Time (seconds) | Jitter Range (seconds) | Actual Delay Time (seconds) |

|---|---|---|---|

| 1 | 1.0 | 0.5 | 1.0 – 1.5 |

| 2 | 2.0 | 0.5 | 1.5 – 2.5 |

| 3 | 4.0 | 0.5 | 3.5 – 4.5 |

| 4 | 8.0 | 0.5 | 7.5 – 8.5 |

| 5 | 16.0 | 0.5 | 15.5 – 16.5 |

As you can see, the actual delay time varies slightly due to the introduced jitter. This reduces the likelihood of retries happening simultaneously and prevents overloading the service.

Using Exponential Backoff with Jitter can make your application more resilient and reliable, handling transient errors gracefully and improving the user experience.

Implementing Exponential Backoff with Jitter in AWS S3 Service

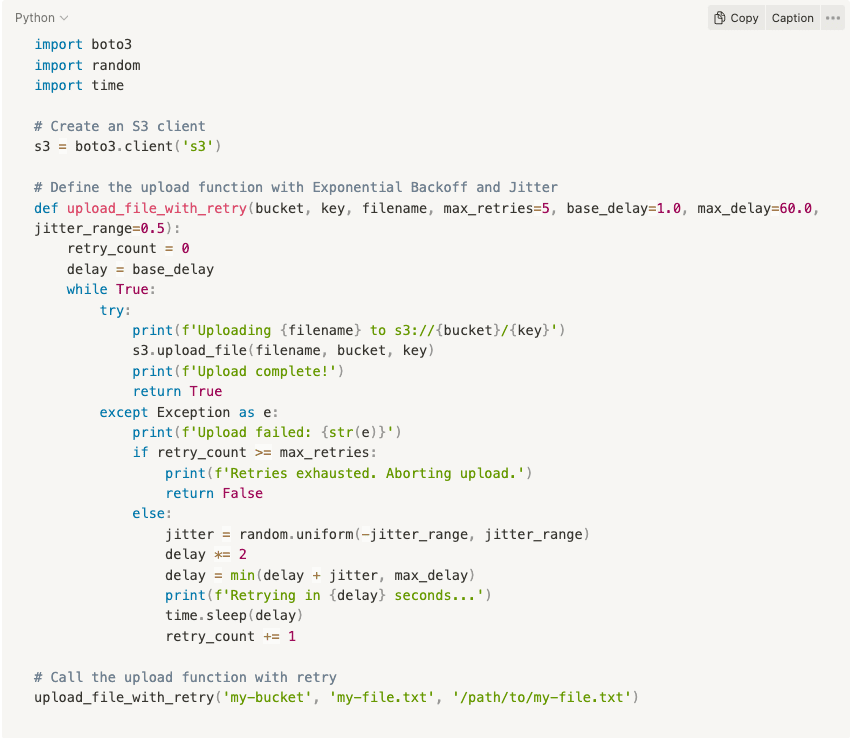

Let’s say you have a Python script that uploads a file to an S3 bucket using the Boto3 library. Sometimes, the upload may fail due to network issues or other transient failures. You would want to implement Exponential Backoff with Jitter to retry the upload in case of failures.

Here’s how to modify the script to implement Exponential Backoff with Jitter:

Below is how the modified script works:

- The upload_file_with_retry function takes the S3 bucket name, key (file path), local file name, and optional parameters for retry settings.

- The function has a while loop that keeps uploading the file until successful or the maximum number of retries are reached.

- If an upload attempt fails, the function prints an error message and calculates the next retry delay. The delay starts with the base delay (1.0 seconds in this example) and doubles with each retry until it reaches the maximum delay (60.0 seconds in this example).

- To introduce Jitter, the function generates a random float number between the negative and positive jitter range (0.5 seconds in this example) and adds it to the next retry delay. This ensures that the actual delay varies slightly and reduces the chance of retries happening simultaneously.

- The function sleeps for the calculated delay before the next retry.

Implementation Benefits

With this implementation, your script can handle transient failures during S3 uploads and automatically retry the operation with Exponential Backoff and Jitter. You can adjust the retry settings according to your use case to balance between retry attempts and the duration of the upload process. Furthermore, implementing exponential backoff with Jitter can also prevent your application from being blacklisted by the service due to excessive retries. Many services have rate-limiting mechanisms in place to prevent abuse – and makes retrying too often and too quickly trigger those mechanisms and cause your IP or account to be temporarily blocked. By using this technique, you can minimize the chances of hitting those rate limits and avoid being blocked. In addition, you can still retry failed requests and eventually succeed. While there are many ways to implement exponential backoff, there are also many packages available that can simplify the process for developers.

Packages for Developers

For example, Python’s Retry library provides a simple and easy-to-use interface for implementing retry behavior with configurable backoff settings. Similarly, the Exponential Backoff package for Node.js offers a similar interface for JavaScript developers, and .NET has Polly.By using these packages, developers can easily implement exponential backoff in their applications without having to worry about the details of the algorithm.

Conclusion

In summary, Exponential Backoff with Jitter is a powerful tool for improving your application’s reliability and resilience when making API calls or communicating with external services over the network. By introducing randomness to the delay time between retries, you can avoid synchronous retries, thereby reducing the chances of overloading the service or triggering rate-limiting mechanisms. Also, by gradually increasing the delay time with each retry, you can give the service enough time to recover from transient errors. This will increase the likelihood of success in the long run.