At the April 2023 RSA Conference, Generative Artificial Intelligence (GenAI) was one of the hottest topics on the show floor at the Moscone Center in San Francisco. In fact, I am part of a growing group of cybersecurity experts who believe that GenAI is taking the cybersecurity industry by storm.

There is no doubt that wherever you travel around the wider tech world in the spring of 2023, GenAI is a hot topic. For example, consider these headlines:

DigitalTrends.com — ChatGPT: How to use the AI chatbot that’s changing everything: “ChatGPT has continued to dazzle the internet with AI-generated content, morphing from a novel chatbot into a piece of technology that is driving the next era of innovation.”

Wall Street Journal (WSJ.com) — Wendy’s, Google Train Next-Generation Order Taker: an AI Chatbot: “Wendy’s is automating its drive-through service using an artificial-intelligence chatbot powered by natural-language software developed by Google and trained to understand the myriad ways customers order off the menu.

“With the move, Wendy’s is joining an expanding group of companies that are leaning on generative AI for growth.”

Vox – Why Google is reinventing the internet search: Generative AI is here. Let’s hope we’re ready.

“If you feel like you’ve been hearing a lot about generative AI, you’re not wrong. After a generative AI tool called ChatGPT went viral a few months ago, it seems everyone in Silicon Valley is trying to find a use for this new technology. Microsoft and Google are chief among them, and they’re racing to reinvent how we use computers. But first, they’re reinventing how we search the internet.”

GenAI and Cybersecurity

Sadly, this amazing technology is also being taken up by bad actors just as fast as we learn about new developments. Consider these headline stories:

CRN: Generative AI Is Going Viral In Cybersecurity. Data Is The Key To Making It Useful.

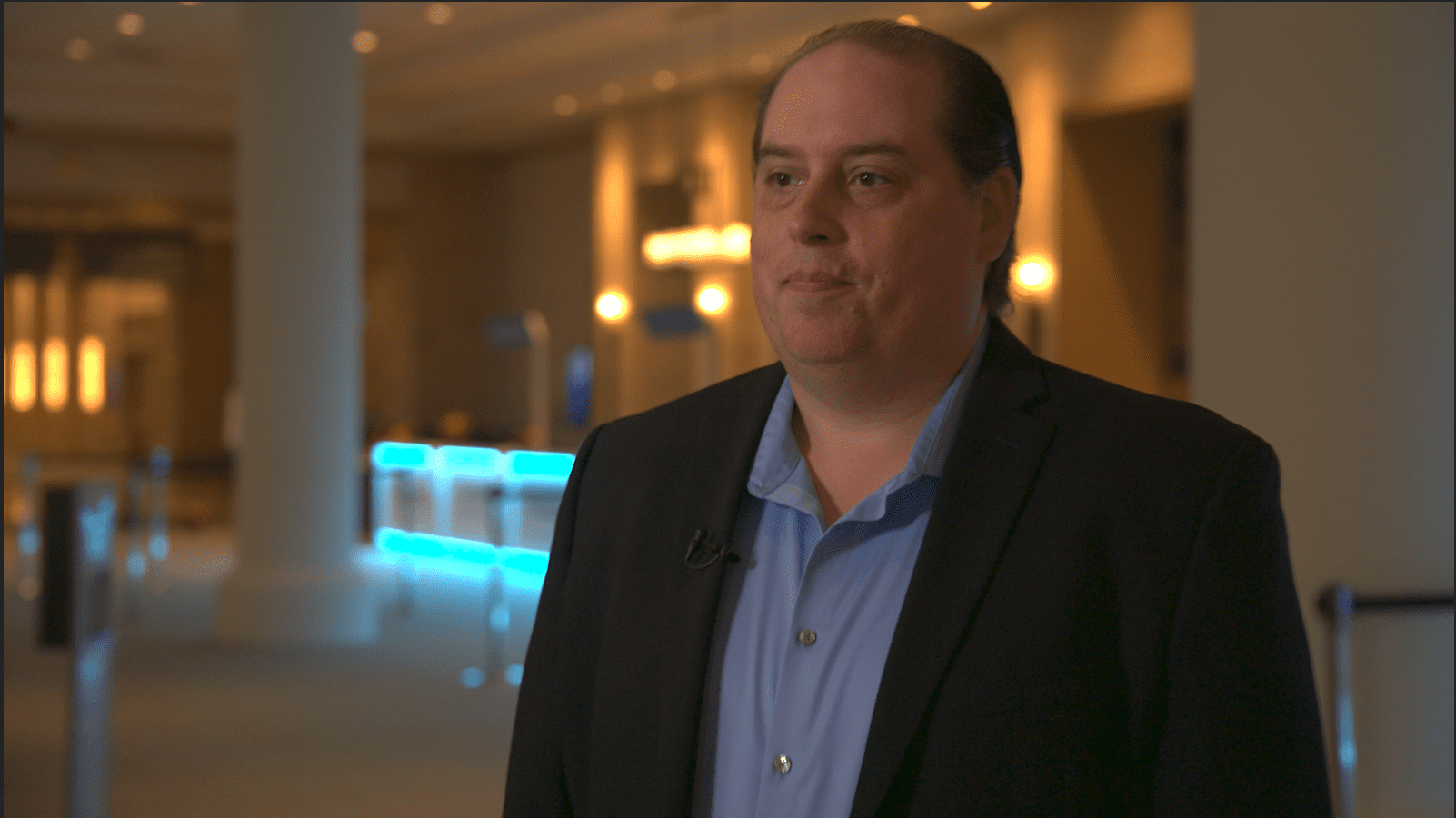

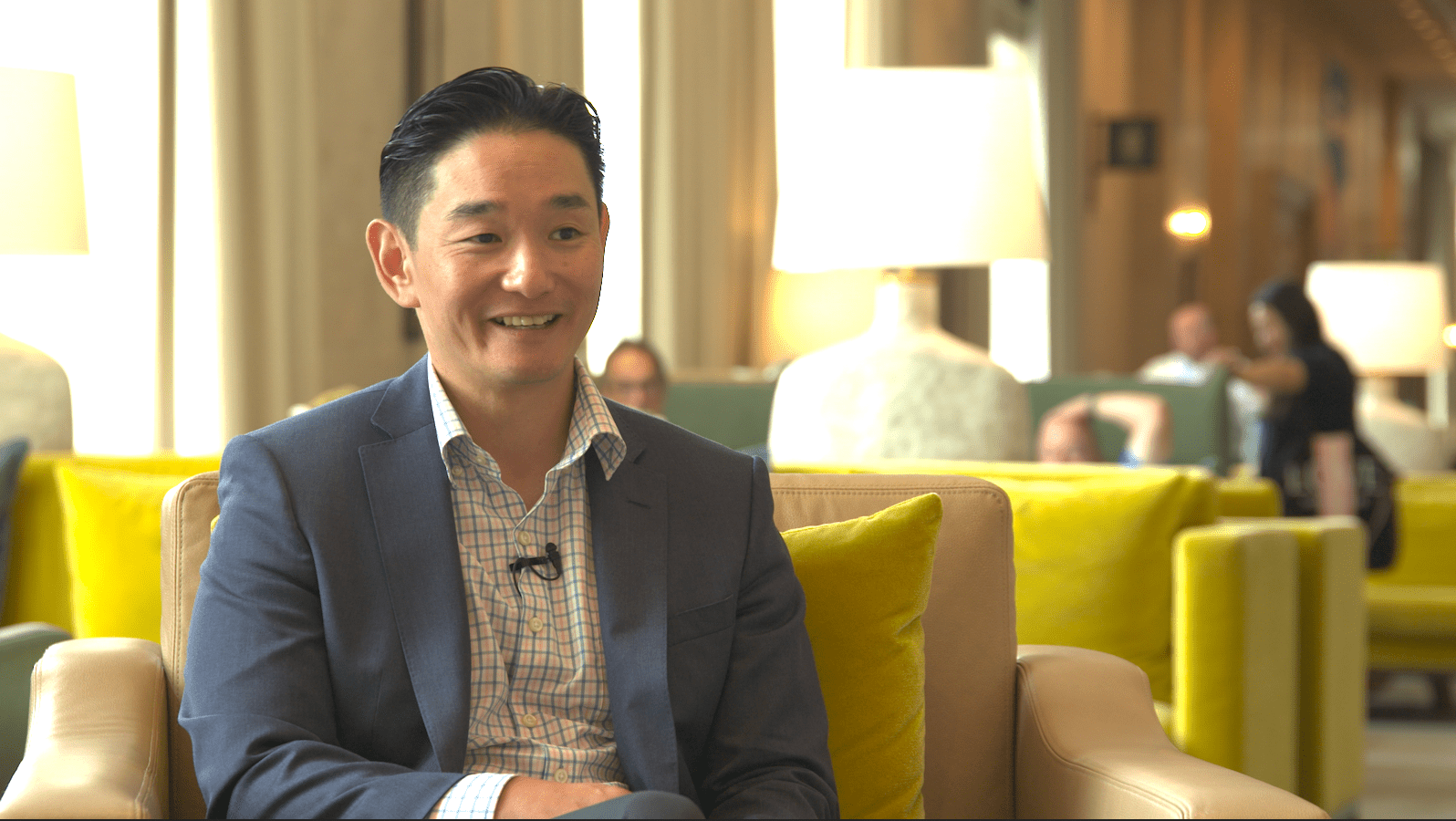

“The rush for cybersecurity vendors to tap into generative AI is in full swing — as anyone who attended last week’s RSA Conference, and perused the many booths touting the technology, will confirm.

“When it comes to generative AI and security products, ‘everybody’s putting it in — whether it’s meaningful or not,’ said Ryan LaSalle, a senior managing director and North America lead for Accenture Security, during an interview at RSAC 2023.”

Fox Business: FTC issues warning on misuse of biometric info amid rise of generative AI

“A policy statement published by the FTC last week warned that the increasingly pervasive use of consumers’ biometric data, including by technologies powered by machine learning and AI, poses risks to consumers’ privacy and data. Biometric information is data that depicts or describes physical, biological or behavioral traits and characteristics, including measurements of an identified person’s body or a person’s voiceprint. …”

Venturebeat: Forrester predicts 2023’s top cybersecurity threats: From generative AI to geopolitical tensions

“Using generative AI, ChatGPT and the large language models supporting them, attackers can scale attacks at levels of speed and complexity not possible before. Forrester predicts use cases will continue to proliferate, limited only by attackers’ creativity. …”

Three Cyberattack Vectors To Watch As GenAI is Used Against Us

So, getting back to basics, what are the top areas of concern as criminals attempt to take advantage of the new GenAI trends? Here are three areas to watch out for as a new generation of cyberattacks emerges, often using vectors we have already identified.

1. Online Fraud

Stories are rampant that feature ‘voice clones’ that sound just like someone you know and trust. For example: AI ‘voice clone’ scams increasingly hitting elderly Americans, senators warn. “Generative artificial intelligence systems are already making it easier for scammers to con elderly Americans out of their money, and several senators are asking the Biden administration to step in and protect people from this quickly emerging threat.”

2. Phishing With GenAI

Most of us have been trained to watch out for fake emails and more. We ask: Who is this message really coming from?

But identifying fraudulent email is getting more difficult as a new generation of fake messages and emails emerges. Consider this article from CNET: It’s Scary Easy to Use ChatGPT to Write Phishing Emails

“For the record, ChatGPT didn’t write me a phishing email when I asked it to. In fact, it gave me a very stern lecture about why phishing is bad. …

“That said, the free artificial intelligence tool that’s taken the world by storm didn’t have a problem writing a very convincing tech support note addressed to my editor asking him to immediately download and install an included update to his computer’s operating system. (I didn’t actually send it, for fear of invoking the wrath of my company’s IT department.). …”

3. Spreading Misinformation

For reference, see this article on 5 Ways Hackers Will Use ChatGPT For Cyberattacks. Point number five highlights this: “The recent discovery of a fake ChatGPT Chrome browser extension that hijacks Facebook accounts and creates rogue admin accounts is just one example of how cybercriminals exploit the popularity of OpenAI’s ChatGPT to distribute malware and spread misinformation.

“The extension, promoted through Facebook-sponsored posts and installed 2,000 times per day since March 3, was engineered to harvest Facebook account data using an already active, authenticated session. Hackers used two bogus Facebook applications to maintain backdoor access and obtain full control of the target profiles. Once compromised, these accounts were used for advertising the malware, allowing it to propagate further.”

Final Thoughts

No, I don’t think any of us should be “throwing out the baby with the bathwater” and avoiding GenAI because of its potential to do harm through these new cyberattacks. In fact, any technology toolset can be used for positive or negative purposes.

Nevertheless, we must be aware that these new GenAI tools are (and will be) used to supercharge cyberattacks against us. GenAI has tremendous potential for good, and we will need to use GenAI technologies and tools to fight cybercrime and “fight fire with fire” to defend enterprises moving forward.

This means GenAI will become part of the new normal in cyberdefense tools and programs. Solutions require answers for people, processes and technology, so new training on GenAI is a must for all of us. The first step is awareness.