Introduction

In the last decade, tools that automate software and hardware deployment pipelines have become mainstream. This opened the door to several new automation streams for deploying on-premises and off-premises machines and their software. What allowed for huge progress in IT and cloud automation also created management issues around OS versions, installed software, and environment versioning. In this blog post, we discuss a potential solution to these problems.

Problem Statement

Organizations maintain machine images for many OS types and flavors, all of which require the latest updates and security patches on a regular basis. Currently, this involves applying updates manually, uploading new images, and instructing users to update their OS and applications. With multiple providers in use, the challenge grows as the image types, flavors, and locations change.

Customer Requirements

- Cloud and Operating System agnostic

- Build images based on software requirements

- Utilize GitLab CI as the CI/CD tool and code repository

- Allow ease of package/image update

- Controlled access and management of secrets

- Support both on-premises and off-premises environments

- Allow for versioning of images

Solution

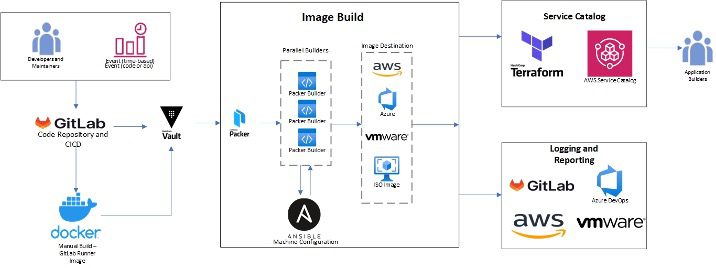

We have developed an automated solution to build standardized machine images. The solution incorporates Packer, Ansible, Vault, Terraform, and GitLab to make images portable across multi-cloud and on-premises environments such as AWS, VMware, and Azure. In this example, we focus on AWS and VMware, although other clouds and CI/CD tools can be used as well.

Process – Workflow

- GitLab CI is the CI/CD tool and orchestrator for this process [2]. A pipeline is kicked off by a push of the .gitlab-ci.yml file, a manual run, or an API call. If done manually, the runner's Docker image is built and pushed to a public or private repository. The runner need not be updated unless a newer version of one of its tools (Terraform, Ansible, Packer, Python, etc.) is needed. Next, the runner validates its access to Vault, its secrets manager, and runs Packer's built-in validator on each image file. This ensures that the build does not begin if a file contains an error.

- Then, Packer is called to build all the image files (.pkr.hcl). While all images are built in parallel, private and public cloud each get a separate build step for code readability and management. Packer leverages a HashiCorp Vault instance for all required secrets [3]. This includes any secrets for Ansible, which can be passed through the provisioner where Ansible is called.

- When machine provisioning is nearing completion, Packer calls the required Ansible role(s) contained in the repository. These apply all the required configuration to the machine images before Packer reports a successful run [4].

- In the final step, Packer moves the image to the final location(s) specified in its configuration file [5][6]. This allows GitLab CI to report a successful pipeline.
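The validate-then-build ordering described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the pipeline's actual code: the helper names (`find_templates`, `packer_commands`, `run_pipeline`) are hypothetical, the templates are assumed to sit somewhere under a single directory, and the builds run one after another here rather than in parallel as in the real pipeline. The only external commands used are `packer validate` and `packer build`.

```python
from pathlib import Path
import subprocess


def find_templates(root):
    """Return every Packer HCL template (*.pkr.hcl) under root, sorted."""
    return sorted(Path(root).rglob("*.pkr.hcl"))


def packer_commands(templates):
    """Build the command list so that every validate precedes any build."""
    validate = [["packer", "validate", str(t)] for t in templates]
    build = [["packer", "build", str(t)] for t in templates]
    return validate + build


def run_pipeline(root, runner=subprocess.run):
    """Run the commands in order.

    With check=True, subprocess.run raises CalledProcessError on the first
    non-zero exit code, so a template that fails validation stops the
    pipeline before any build starts.
    """
    for cmd in packer_commands(find_templates(root)):
        runner(cmd, check=True)
```

Calling `run_pipeline("images/")` (a hypothetical directory) would validate every template found there and only then start building. The `runner` parameter exists so the ordering can be exercised without Packer installed.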

Challenges Involved

- We initially used Alpine as the base image and ran into multiple compatibility issues with the Vault version and the GitLab runner. Eventually, we switched to Ubuntu 20.04, which made the installed technologies simpler to keep compatible.

- HashiCorp Vault on our GitLab runner threw errors until we added `RUN setcap cap_ipc_lock= /usr/bin/vault` to the Dockerfile [1].

- Image tags are not copied to other regions or accounts in AWS. This would normally be expected to happen when Packer completes an AMI and transfers it to the other accounts or regions by enabling the share permissions. AWS Support informed us that this is a security feature of AWS and will not change anytime soon. As a result, versioning tags in AWS are more complicated than for other image destinations and must be included in the image name or handled in a secondary management step [7].
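One way to carry the version in the image name, as the last point suggests, is to encode it when the name is generated and parse it back out wherever the image is consumed. The sketch below is a minimal illustration only: the naming convention (`<base>-v<major>.<minor>.<patch>-<UTC timestamp>`) and both function names are assumptions made for this example, not the convention used in our pipeline.

```python
import re
from datetime import datetime, timezone

# Assumed convention: <base>-v<major>.<minor>.<patch>-<YYYYMMDDHHMMSS>
_NAME_RE = re.compile(
    r"^(?P<base>.+)-v(?P<version>\d+\.\d+\.\d+)-(?P<stamp>\d{14})$"
)


def versioned_image_name(base, version, built_at=None):
    """Return an image name that embeds the version and a UTC build time."""
    built_at = built_at or datetime.now(timezone.utc)
    name = f"{base}-v{version}-{built_at:%Y%m%d%H%M%S}"
    if _NAME_RE.match(name) is None:
        raise ValueError(f"version {version!r} does not fit the convention")
    return name


def parse_image_name(name):
    """Recover (base, version, timestamp) from a name built as above."""
    match = _NAME_RE.match(name)
    if match is None:
        raise ValueError(f"{name!r} does not follow the naming convention")
    return match.group("base"), match.group("version"), match.group("stamp")
```

For example, `versioned_image_name("ubuntu-2004-base", "1.4.0", datetime(2024, 1, 2, 3, 4, 5, tzinfo=timezone.utc))` yields `ubuntu-2004-base-v1.4.0-20240102030405`, and `parse_image_name` turns that string back into its three parts. Because the version travels in the name itself, it survives sharing to another account or region even though the tags do not.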

References

1 – https://github.com/hashicorp/vault/issues/10048#issuecomment-700779263

2 – https://docs.gitlab.com/ee/ci/

3 – https://www.vaultproject.io/docs

4 – https://www.packer.io/plugins/provisioners/ansible/ansible

5 – https://www.packer.io/plugins/builders/vmware

6 – https://www.packer.io/plugins/builders/amazon

7 – https://docs.aws.amazon.com/AWSEC2/latest/UserGuide/Using_Tags.html#tag-restrictions